Early concept

Always With You

Smarter. Faster. More Human.

Aura is a research-driven prototype that aims to develop less socially disruptive technology.

Always Private

Always With You

Always For You

We carry supercomputers that make us lonelier. Assistants that don't assist. Companions that don't know us. Intelligence without empathy.

Something's missing.

SOHAM DATTA

How It Works

ENGINE

How do you fit memory in Aura? Here's our research approach.

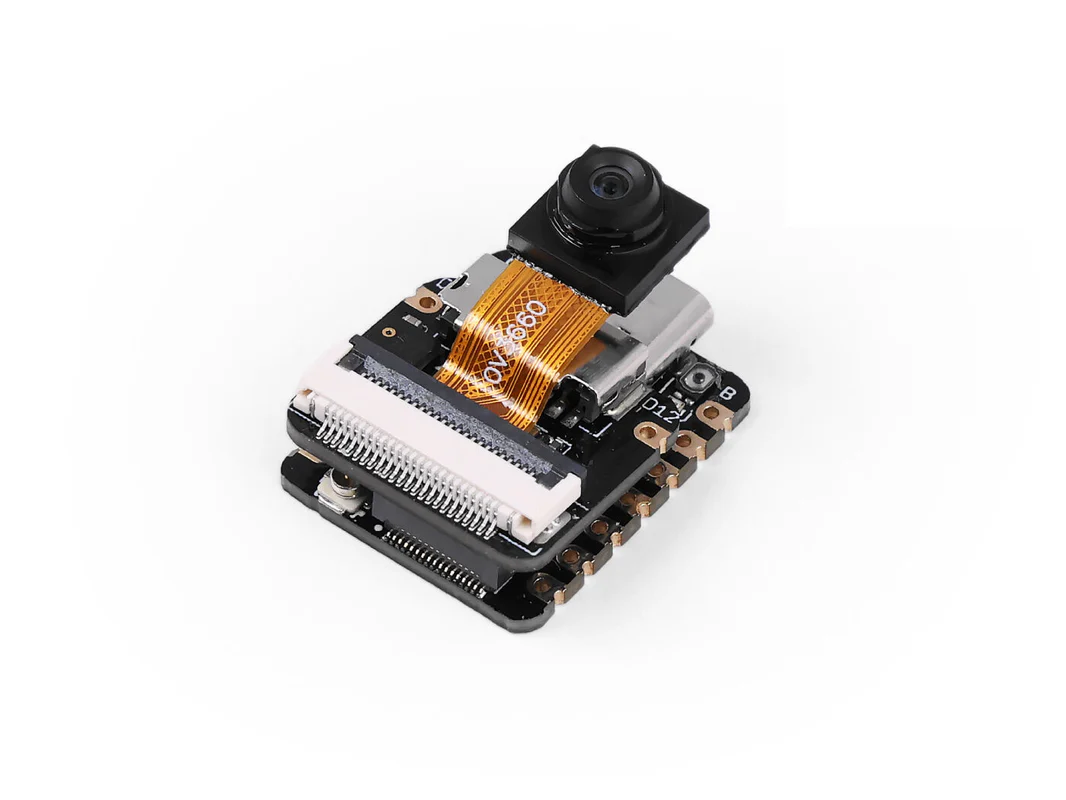

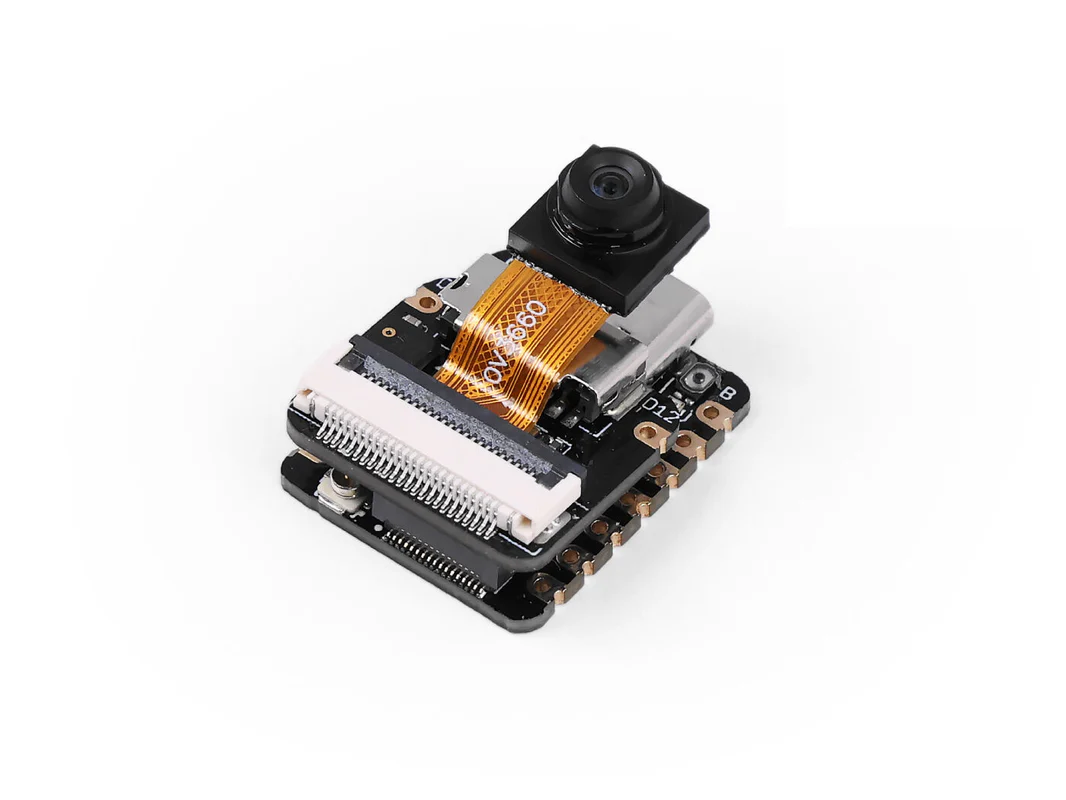

Engine: ESP32-S3 Sense

Compact, wearable form factor powered by the ESP32-S3 Sense for low-latency inference (<500 ms). Designed to operate silently and efficiently, with edge processing for privacy.

Sensor input (mic array)

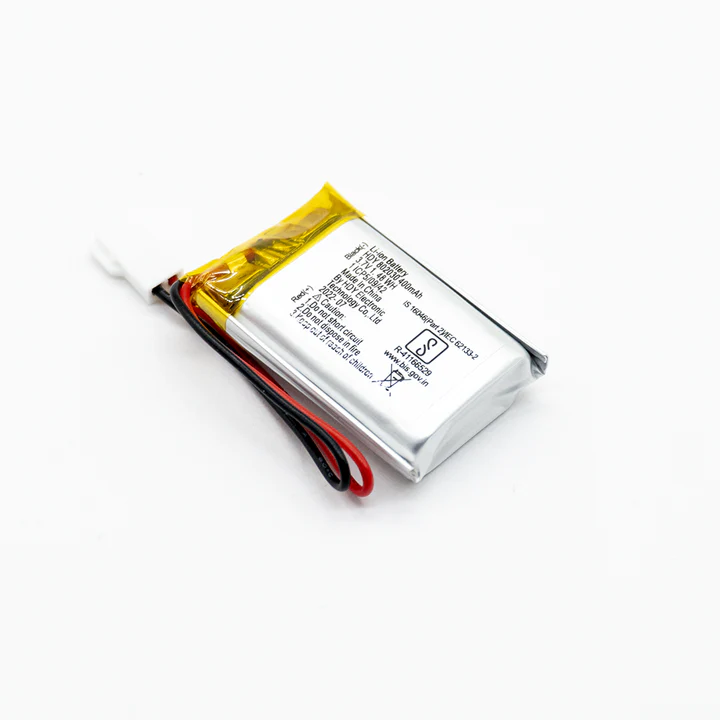

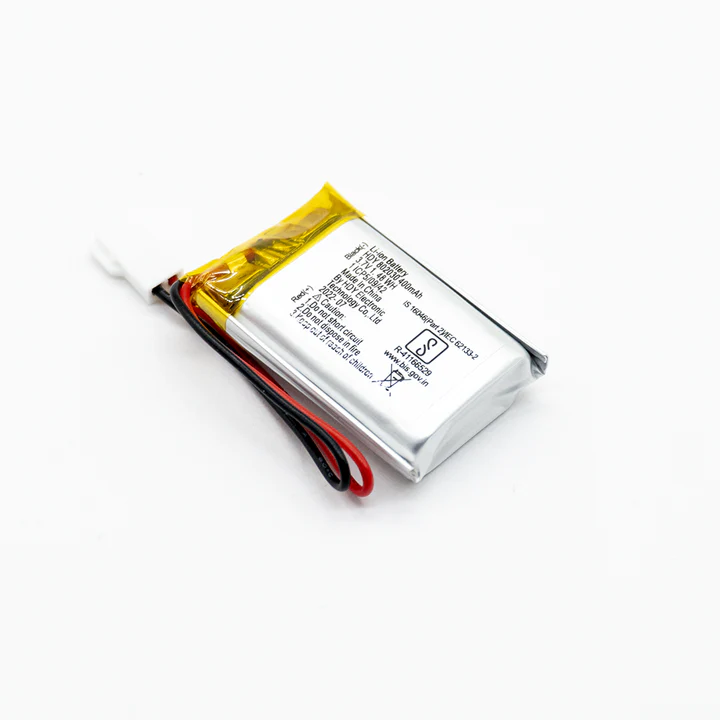

Battery

Camera

Speaker

It's about making us more human.

Current Paradigm

Cloud-based AI

Task-oriented

Privacy trade-offs

Limits you

The Shift

Edge-native AI

Emotion-aware

Privacy-preserving

Changing

Aura's Approach

On-device processing

Real-time emotion detection

Zero data collection

Mindful presence

Control

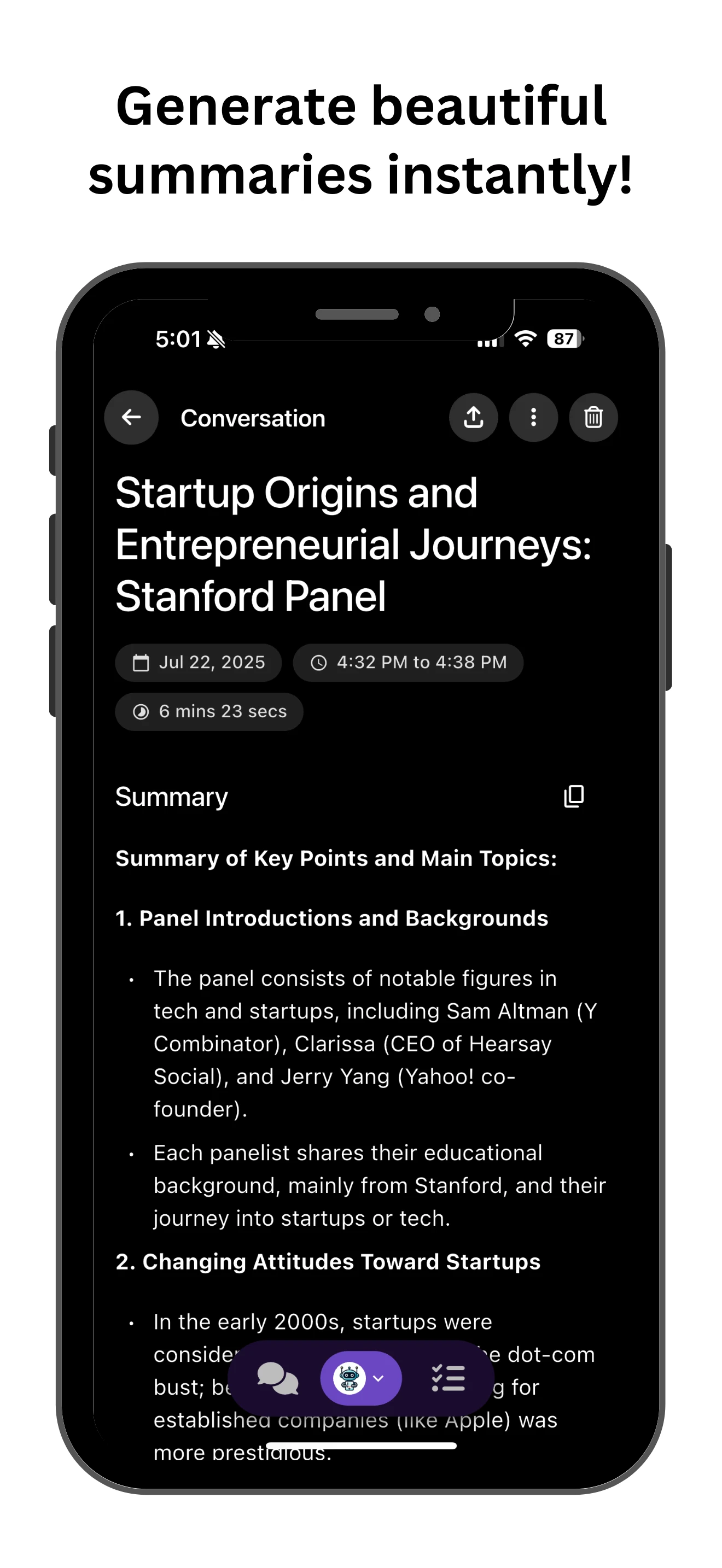

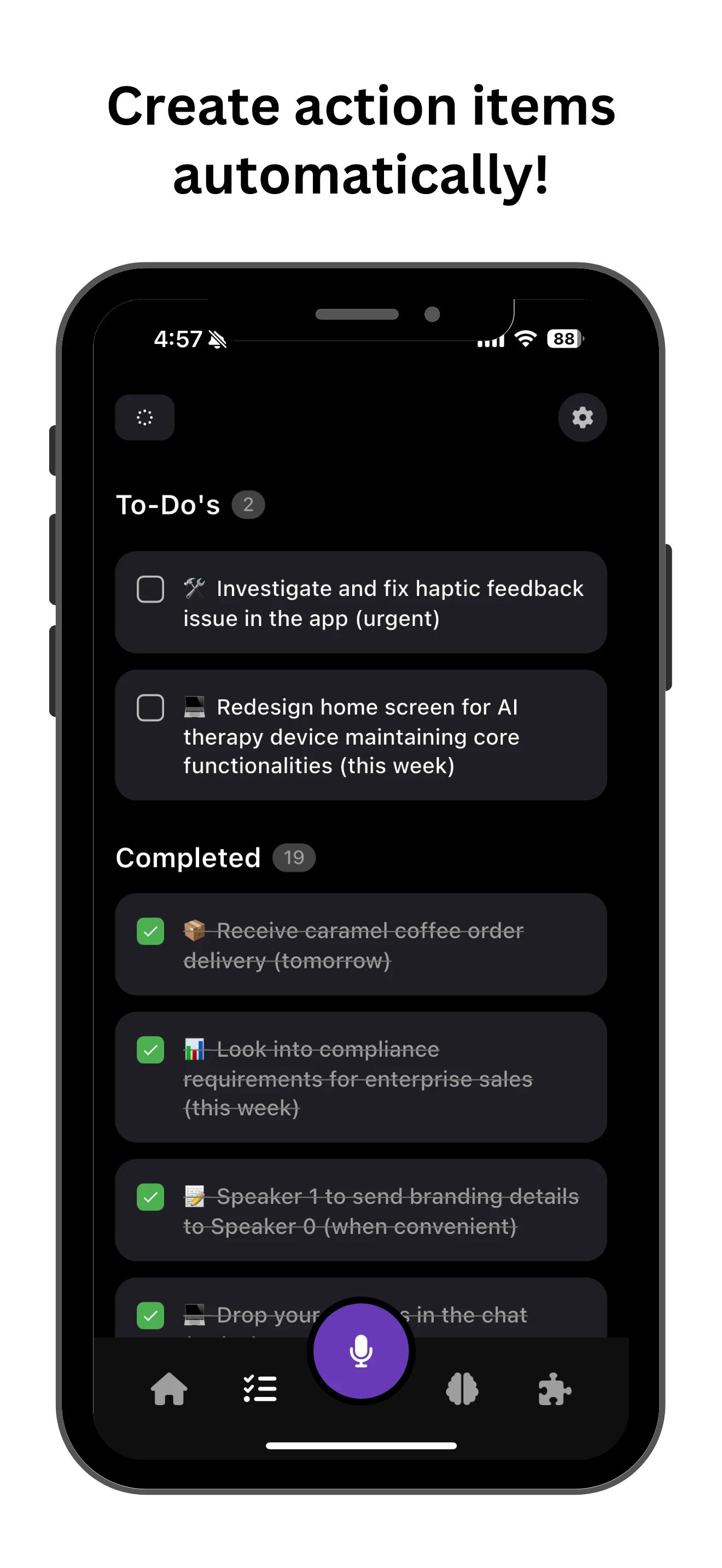

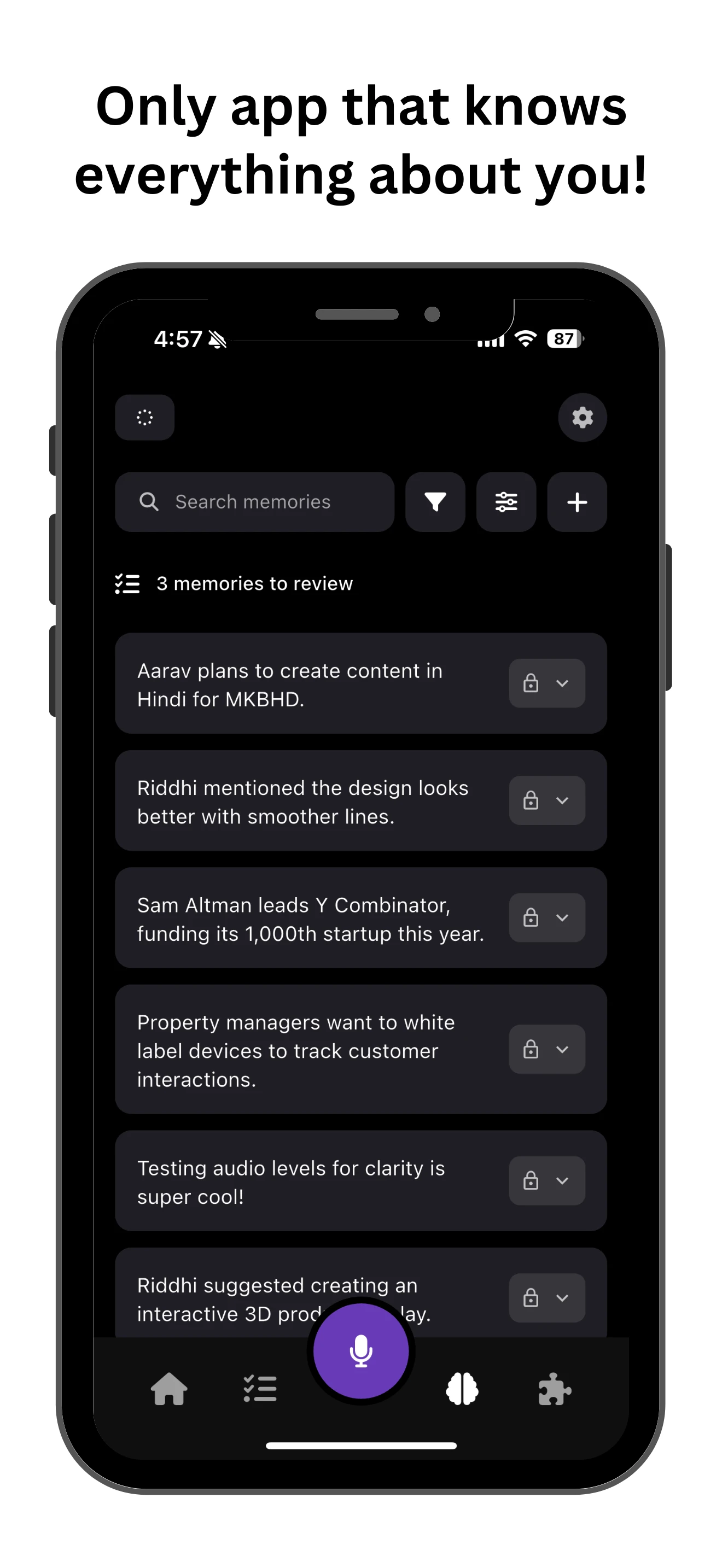

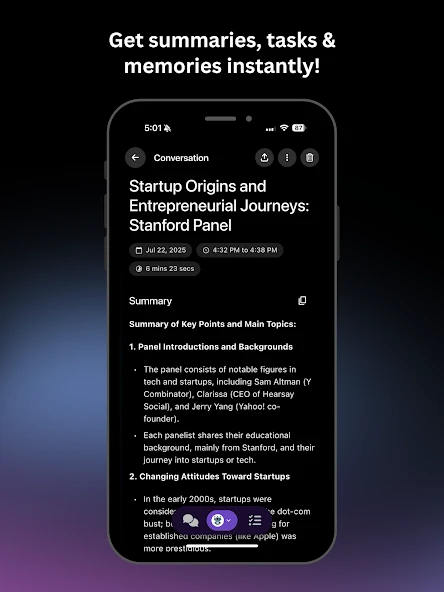

AI in Action

Software

Discover how powerful smart features turn every action into lasting progress.

R & D

RESEARCH & DEVELOPMENT

How Aura is taking shape

Multimodal Intelligence

How does AI process what you see AND hear simultaneously?

Dual-stream architecture on ESP32-S3. Audio-visual fusion at 200 ms latency.

85% context accuracy in field tests
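One way to picture the fusion step is as timestamp alignment between the two streams. The sketch below is illustrative only and is not Aura's firmware: the `Sample` type, the `pair_streams` function and the 50 ms skew tolerance are all assumptions made for the example. It pairs each video sample with the audio sample closest to it in time and drops the pair when the gap is too large.

```python
from bisect import bisect_left
from dataclasses import dataclass


@dataclass
class Sample:
    t_ms: int     # capture timestamp in milliseconds
    payload: str  # stand-in for audio features or an image embedding


def pair_streams(audio, video, max_skew_ms=50):
    """Pair each video sample with the nearest audio sample in time.

    Both lists must be sorted by t_ms. max_skew_ms is a hypothetical
    tolerance; pairs further apart than this are discarded.
    """
    audio_times = [a.t_ms for a in audio]
    pairs = []
    for v in video:
        # Index of the first audio sample at or after the video timestamp.
        i = bisect_left(audio_times, v.t_ms)
        # The nearest neighbour is either just before or just at/after it.
        candidates = audio[max(i - 1, 0): i + 1]
        if not candidates:
            continue
        best = min(candidates, key=lambda a: abs(a.t_ms - v.t_ms))
        if abs(best.t_ms - v.t_ms) <= max_skew_ms:
            pairs.append((best, v))
    return pairs


audio = [Sample(0, "a0"), Sample(100, "a1"), Sample(200, "a2")]
video = [Sample(90, "v0"), Sample(500, "v1")]
pairs = pair_streams(audio, video)
```

Here the frame at 90 ms is matched with the audio sample at 100 ms, while the frame at 500 ms has no audio within tolerance and is skipped.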

Privacy-First

Can AI be personal without the cloud?

Edge-first processing. Quantized models run locally. Zero telemetry by design.

100% offline mode available
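To give a feel for what "quantized" means here, the sketch below shows symmetric 8-bit quantization of a handful of weights. It is a generic illustration, not Aura's actual model pipeline; the function names and the sample weights are assumptions made for the example.

```python
def quantize_int8(weights):
    """Map float weights to integers in [-127, 127] with one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Avoid division by zero when every weight is 0.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Recover approximate float weights from the integer codes."""
    return [v * scale for v in q]


weights = [0.5, -1.0, 0.25, 0.0]  # hypothetical values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
```

Each weight is stored in one byte instead of four, at the cost of a rounding error of at most half a quantization step per weight.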

Battery Life

8+ hours on a wearable AI device?

Deep sleep between captures. Adaptive duty cycling drops sleep current draw to <0.1 mA.

Battery life may vary with usage.
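The effect of duty cycling on runtime can be estimated with simple arithmetic: average current is the time-weighted mix of active and sleep current. In the sketch below only the 0.1 mA sleep figure comes from the text above; the 100 mA active draw, the 25% duty cycle and the 250 mAh battery are hypothetical numbers chosen for illustration.

```python
def average_current_ma(active_ma, sleep_ma, duty):
    """Time-weighted average current for a device awake `duty` of the time."""
    if not 0.0 <= duty <= 1.0:
        raise ValueError("duty must be between 0 and 1")
    return duty * active_ma + (1.0 - duty) * sleep_ma


def runtime_hours(capacity_mah, avg_ma):
    """Idealised runtime; ignores battery ageing and regulator losses."""
    return capacity_mah / avg_ma


# Hypothetical: 100 mA while capturing, 0.1 mA asleep, awake 25% of the time.
avg = average_current_ma(active_ma=100.0, sleep_ma=0.1, duty=0.25)
hours = runtime_hours(capacity_mah=250.0, avg_ma=avg)
```

With these assumed numbers the average draw is about 25 mA, which gives roughly 10 hours on a 250 mAh cell. The active share dominates the total, which is why runtime depends so strongly on usage.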

FAQ

Frequently Asked Questions

Aura is still in research and early design exploration.

Here’s what we’re building, testing, and imagining for the future.

What is Aura?

Aura is an experimental concept exploring emotional intelligence in everyday AI — a wearable system designed to understand tone, mood, and context locally on-device.

Why does Aura even exist?

What stage is Aura in right now?

Does Aura record or share my data?

Who is building Aura?

What’s the long-term vision for Aura?

Contribute to Research

We'll be happy to work with you.